Build Base Image

Install Docker

> sudo yum check-update

> curl -fsSL https://get.docker.com/ | sudo sh

> sudo systemctl start docker

> sudo systemctl status docker

> sudo systemctl enable docker

> sudo usermod -aG docker $(whoami)

> sudo reboot

Install Kubernetes

| Code Block |

|---|

sudo su

|

> sudo systemctl enable sshd

> sudo systemctl start sshd

Install Docker

> yum install docker

Install Kubernetes

| Code Block |

|---|

cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://packages.cloud.google.com/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=1
repo_gpgcheck=1
gpgkey=https://packages.cloud.google.com/yum/doc/yum-key.gpg https://packages.cloud.google.com/yum/doc/rpm-package-key.gpg
exclude=kube*
EOF

# Set SELinux in permissive mode (effectively disabling it)
setenforce 0
sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' /etc/selinux/config

yum install -y kubelet kubeadm kubectl --disableexcludes=kubernetes

systemctl enable --now kubelet
|

Pull images

> sudo kubeadm config images pull

| Code Block |

|---|

[config/images] Pulled k8s.gcr.io/kube-apiserver:v1.13.1
[config/images] Pulled k8s.gcr.io/kube-controller-manager:v1.13.1
[config/images] Pulled k8s.gcr.io/kube-scheduler:v1.13.1
[config/images] Pulled k8s.gcr.io/kube-proxy:v1.13.1
[config/images] Pulled k8s.gcr.io/pause:3.1
[config/images] Pulled k8s.gcr.io/etcd:3.2.24
[config/images] Pulled k8s.gcr.io/coredns:1.2.6
|

...

Configure OS

Disable firewall

> systemctl disable firewalld

> systemctl stop firewalld

> systemctl status firewalld

Fix for error: FileContent--proc-sys-net-bridge-bridge-nf-call-iptables

> vi /etc/sysctl.conf

| Code Block |

|---|

net.bridge.bridge-nf-call-iptables = 1 |

> sudo sysctl -p
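Editing /etc/sysctl.conf by hand works, but the same change can be scripted so that it is repeatable on every node. The following is a minimal sketch and not part of the original procedure: set_sysctl_key is a hypothetical helper name, and the sketch assumes GNU sed (as shipped with CentOS).

```shell
#!/bin/sh
# Hypothetical helper (not from the original page): ensure "KEY = VALUE"
# appears exactly once in a sysctl-style file. Assumes GNU sed.
# Usage: set_sysctl_key FILE KEY VALUE
set_sysctl_key() {
    local file="$1" key="$2" value="$3" pattern
    # Escape dots so the key is matched literally by grep and sed.
    pattern=$(printf '%s' "$key" | sed 's/\./\\./g')
    if grep -Eq "^[[:space:]]*${pattern}[[:space:]]*=" "$file" 2>/dev/null; then
        # Key already present: rewrite the existing line in place.
        sed -i -E "s|^[[:space:]]*${pattern}[[:space:]]*=.*|${key} = ${value}|" "$file"
    else
        # Key absent: append it.
        printf '%s = %s\n' "$key" "$value" >> "$file"
    fi
}
```

As root, this would be called as set_sysctl_key /etc/sysctl.conf net.bridge.bridge-nf-call-iptables 1, followed by sysctl -p to load the setting.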

Disable SWAP

> swapoff -va

> vi /etc/fstab

Comment out the swap entry in /etc/fstab so that swap stays disabled after the reboot.

> reboot
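The /etc/fstab edit in this step can also be scripted. The sketch below is an assumption about how one might do it, not something taken from the original page: comment_out_swap is a hypothetical helper, it relies on GNU sed, and it keeps a .bak copy of the file it edits.

```shell
#!/bin/sh
# Hypothetical helper (not from the original page): comment out every
# active swap entry in an fstab-style file. Assumes GNU sed.
# Usage: comment_out_swap FILE   (e.g. /etc/fstab, run as root)
comment_out_swap() {
    local file="$1"
    # Skip lines that are already comments; prefix '#' to any remaining
    # line that has "swap" as a whitespace-delimited field.
    # The original file is preserved as FILE.bak.
    sed -i.bak -E '/^[[:space:]]*#/! s/^(.*[[:space:]]swap[[:space:]].*)$/#\1/' "$file"
}
```

Running comment_out_swap /etc/fstab as root before the reboot would then replace the manual edit; running it twice does not add a second comment marker.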

Initialize Master (using Flannel)

> sudo kubeadm init --apiserver-advertise-address <IP ADDRESS> --pod-network-cidr=10.244.0.0/16

| Code Block |

|---|

[init] Using Kubernetes version: v1.13.2

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Activating the kubelet service

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [deepthought kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.1.50]

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [deepthought localhost] and IPs [192.168.1.50 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [deepthought localhost] and IPs [192.168.1.50 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 26.002483 seconds

[uploadconfig] storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.13" in namespace kube-system with the configuration for the kubelets in the cluster

[patchnode] Uploading the CRI Socket information "/var/run/dockershim.sock" to the Node API object "deepthought" as an annotation

[mark-control-plane] Marking the node deepthought as control-plane by adding the label "node-role.kubernetes.io/master=''"

[mark-control-plane] Marking the node deepthought as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: 0s0oa4.2i5lo5vyuyvbnze6

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstraptoken] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstraptoken] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstraptoken] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstraptoken] creating the "cluster-info" ConfigMap in the "kube-public" namespace

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes master has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of machines by running the following on each node

as root:

kubeadm join 192.168.1.50:6443 --token 0s0oa4.2i5lo5vyuyvbnze6 --discovery-token-ca-cert-hash sha256:20b8104c05927611df68ebb0eb9fbf8f65d3b85d2e57de9ecc5468e5369b9c22

|

Record the kubeadm join command!

As your non root user:

| Code Block |

|---|

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

|

Verify that your network is on the right network interface

Build the Load Balancer

Set Hostname

> sudo hostnamectl set-hostname k8slb

> sudo hostnamectl

Update Yum

> yum update

Disable firewall

> systemctl disable firewalld

> systemctl stop firewalld

> systemctl status firewalld

Install haproxy

> yum install haproxy

> vi /etc/haproxy/haproxy.cfg

| Code Block |

|---|

global

...

defaults

...

#---------------------------------------------------------------------

# main frontend which proxies to the backends

#---------------------------------------------------------------------

frontend kubernetes

bind *:6443

mode tcp

default_backend kubernetes-master-nodes

#---------------------------------------------------------------------

# backend pool of Kubernetes master API servers

#---------------------------------------------------------------------

backend kubernetes-master-nodes

mode tcp

balance roundrobin

option tcp-check

server k8smaster1 172.20.233.181:6443 check fall 3 rise 2

server k8smaster2 172.20.233.182:6443 check fall 3 rise 2

server k8smaster3 172.20.233.183:6443 check fall 3 rise 2

|
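The three server lines in the backend follow one pattern, so they can be generated from a list of master IPs instead of typed by hand. This is only an illustrative sketch: haproxy_server_lines is a hypothetical helper, and it assumes the masters are named k8smaster1, k8smaster2, ... in the order the IPs are given.

```shell
#!/bin/sh
# Hypothetical helper (not from the original page): print one HAProxy
# "server" line per master IP, numbering the masters in the order given.
# Usage: haproxy_server_lines IP [IP ...]
haproxy_server_lines() {
    local n=1 ip
    for ip in "$@"; do
        # Same health-check settings as the hand-written config above.
        printf 'server k8smaster%d %s:6443 check fall 3 rise 2\n' "$n" "$ip"
        n=$((n + 1))
    done
}
```

For example, haproxy_server_lines 172.20.233.181 172.20.233.182 172.20.233.183 prints the three lines used in this guide. After editing the file, haproxy -c -f /etc/haproxy/haproxy.cfg checks the configuration for syntax errors before the service is started.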

> sudo systemctl start haproxy

> sudo systemctl enable haproxy

> sudo systemctl status haproxy

Verify that you can connect

> nc -v <LOAD_BALANCER_IP> 6443

For example:

> nc -v 172.20.233.180 6443

...

Build a K8sMaster1 Node

Login to your Master node

> ssh test@172.20.233.181

Set the hostname

> sudo hostnamectl set-hostname k8smaster1

> sudo hostnamectl

Generate SSH Key

As test:

> ssh-keygen -t rsa -b 2048

Copy to other nodes

> ssh-copy-id test@172.20.233.182

...

> ssh-copy-id test@172.20.233.186

As root:

> sudo su

> ssh-keygen -t rsa -b 2048

Copy to other nodes

> ssh-copy-id test@172.20.233.182

...

> ssh-copy-id test@172.20.233.186

Create kubeadm-config file

> vi kubeadm-config.yaml

| Code Block |

|---|

apiVersion: kubeadm.k8s.io/v1beta1
kind: ClusterConfiguration
kubernetesVersion: stable
apiServer:
  certSANs:
  - "k8slb.ott.dev.intra"
controlPlaneEndpoint: "k8slb.ott.dev.intra:6443"
networking:
  podSubnet: 10.244.0.0/16
|
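Because YAML indentation is easy to lose when copying from a wiki page, it can help to generate the file from a script. The sketch below writes the same configuration as above; write_kubeadm_config is a hypothetical helper name, and the load balancer hostname and pod subnet are passed in as parameters rather than hard-coded.

```shell
#!/bin/sh
# Hypothetical helper (not from the original page): write a kubeadm
# ClusterConfiguration for a load-balanced control plane.
# Usage: write_kubeadm_config OUTPUT_FILE LB_HOSTNAME [POD_SUBNET]
write_kubeadm_config() {
    local out="$1" lb="$2" subnet="${3:-10.244.0.0/16}"
    # The default subnet 10.244.0.0/16 is the one this guide uses for Flannel.
    cat > "$out" <<EOF
apiVersion: kubeadm.k8s.io/v1beta1
kind: ClusterConfiguration
kubernetesVersion: stable
apiServer:
  certSANs:
  - "${lb}"
controlPlaneEndpoint: "${lb}:6443"
networking:
  podSubnet: ${subnet}
EOF
}
```

Calling write_kubeadm_config kubeadm-config.yaml k8slb.ott.dev.intra produces the file shown in this section.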

Initialize Master (using Flannel)

> sudo kubeadm init --config=kubeadm-config.yaml

| Code Block |

|---|

[init] Using Kubernetes version: v1.13.4

[preflight] Running pre-flight checks

...

Your Kubernetes master has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of machines by running the following on each node

as root:

kubeadm join k8slb.ott.dev.intra:6443 --token ktemf3.pshisb9lspt1i40i --discovery-token-ca-cert-hash sha256:1e737466a59f00083a4ddf43c9fcf446a5b1cee8346afd1565d341fe5dee2c46

|

Record the kubeadm join command!

As your non root user:

| Code Block |

|---|

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

|

Verify that your network is on the right network interface

| Code Block |

|---|

kubectl get pods -o wide --all-namespaces
NAMESPACE     NAME                               READY   STATUS    RESTARTS   AGE    IP          NODE       NOMINATED NODE   READINESS GATES
kube-system   coredns-86c58d9df4-8zk5t           0/1     Pending   0          2d3h   <none>      <none>     <none>           <none>
kube-system   coredns-86c58d9df4-tsftk           0/1     Pending   0          2d3h   <none>      <none>     <none>           <none>
kube-system   etcd-k8master                      1/1     Running   1          2d3h   10.0.3.15   k8master   <none>           <none>
kube-system   kube-apiserver-k8master            1/1     Running   1          2d3h   10.0.3.15   k8master   <none>           <none>
kube-system   kube-controller-manager-k8master   1/1     Running   1          2d3h   10.0.3.15   k8master   <none>           <none>
kube-system   kube-proxy-88gdq                   1/1     Running   1          2d3h   10.0.3.15   k8master   <none>           <none>
kube-system   kube-scheduler-k8master            1/1     Running   1          2d3h   10.0.3.15   k8master   <none>           <none>
|

Install Flannel Network Plugin

> sudo sysctl net.bridge.bridge-nf-call-iptables=1

> kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/bc79dd1505b0c8681ece4de4c0d86c5cd2643275/Documentation/kube-flannel.yml

NOTE: See https://kubernetes.io/docs/setup/independent/create-cluster-kubeadm/ for details on the various plugins and their setup.

Verify that all of your kubernetes pods are running

> kubectl get pods --all-namespaces

| Code Block |

|---|

NAMESPACE     NAME                               READY   STATUS    RESTARTS   AGE
kube-system   coredns-86c58d9df4-8zk5t           1/1     Running   0          47h
kube-system   coredns-86c58d9df4-tsftk           1/1     Running   0          47h
kube-system   etcd-k8master                      1/1     Running   1          47h
kube-system   kube-apiserver-k8master            1/1     Running   1          47h
kube-system   kube-controller-manager-k8master   1/1     Running   1          47h
kube-system   kube-flannel-ds-amd64-fl5wp        1/1     Running   0          12s
kube-system   kube-proxy-88gdq                   1/1     Running   1          47h
kube-system   kube-scheduler-k8master            1/1     Running   1          47h
|

Copy Certificates to Other Master Nodes

> sudo su

> vi copyCertsToMasters.sh

| Code Block |

|---|

USER=test # customizable
CONTROL_PLANE_IPS="172.20.233.182 172.20.233.183"
for host in ${CONTROL_PLANE_IPS}; do
    scp /etc/kubernetes/pki/ca.crt "${USER}"@$host:
    scp /etc/kubernetes/pki/ca.key "${USER}"@$host:
    scp /etc/kubernetes/pki/sa.key "${USER}"@$host:
    scp /etc/kubernetes/pki/sa.pub "${USER}"@$host:
    scp /etc/kubernetes/pki/front-proxy-ca.crt "${USER}"@$host:
    scp /etc/kubernetes/pki/front-proxy-ca.key "${USER}"@$host:
    scp /etc/kubernetes/pki/etcd/ca.crt "${USER}"@$host:etcd-ca.crt
    scp /etc/kubernetes/pki/etcd/ca.key "${USER}"@$host:etcd-ca.key
    scp /etc/kubernetes/admin.conf "${USER}"@$host:
done
|

> chmod +x copyCertsToMasters.sh

> ./copyCertsToMasters.sh

Setup Other Master Nodes

Perform the following steps on the other master nodes (k8smaster2 and k8smaster3).

Set the hostname

Use the hostname that matches the node (k8smaster2 or k8smaster3):

> sudo su

> hostnamectl set-hostname k8smaster2

> hostnamectl

> reboot

Move Certificates

> ssh test@<ip of master node>

> sudo su

> vi moveFilesFromMaster.sh

| Code Block |

|---|

USER=test # customizable

mkdir -p /etc/kubernetes/pki/etcd

mv /home/${USER}/ca.crt /etc/kubernetes/pki/

mv /home/${USER}/ca.key /etc/kubernetes/pki/

mv /home/${USER}/sa.pub /etc/kubernetes/pki/

mv /home/${USER}/sa.key /etc/kubernetes/pki/

mv /home/${USER}/front-proxy-ca.crt /etc/kubernetes/pki/

mv /home/${USER}/front-proxy-ca.key /etc/kubernetes/pki/

mv /home/${USER}/etcd-ca.crt /etc/kubernetes/pki/etcd/ca.crt

mv /home/${USER}/etcd-ca.key /etc/kubernetes/pki/etcd/ca.key

mv /home/${USER}/admin.conf /etc/kubernetes/admin.conf |

> chmod +x moveFilesFromMaster.sh

> ./moveFilesFromMaster.sh
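Before joining the cluster it is worth confirming that every file from the copy step landed in the right place, since a missing certificate tends to surface later as a less obvious join failure. The following is a hedged sketch: missing_pki_files is a hypothetical helper, and the file list is simply the set of files moved by the script above.

```shell
#!/bin/sh
# Hypothetical helper (not from the original page): list the files from
# the copy step that are absent under a Kubernetes config directory.
# Prints one missing path per line; returns non-zero if any are missing.
# Usage: missing_pki_files [DIR]   (DIR defaults to /etc/kubernetes)
missing_pki_files() {
    local dir="${1:-/etc/kubernetes}" f missing=0
    for f in pki/ca.crt pki/ca.key pki/sa.key pki/sa.pub \
             pki/front-proxy-ca.crt pki/front-proxy-ca.key \
             pki/etcd/ca.crt pki/etcd/ca.key admin.conf; do
        if [ ! -f "$dir/$f" ]; then
            echo "$dir/$f"
            missing=1
        fi
    done
    return "$missing"
}
```

Run as root, missing_pki_files prints nothing and returns success when all nine files are in place.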

Join the Cluster

> sudo su

> kubeadm join k8slb.ott.dev.intra:6443 --token ktemf3.pshisb9lspt1i40i --discovery-token-ca-cert-hash sha256:1e737466a59f00083a4ddf43c9fcf446a5b1cee8346afd1565d341fe5dee2c46 --experimental-control-plane

Notice the addition of the --experimental-control-plane flag. This flag automates joining this control plane node to the cluster.

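As your non root user:

| Code Block |

|---|

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

|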

Verify that the node has joined the cluster:

On k8smaster1, as the test user, run:

> kubectl get nodes
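To check node readiness from a script rather than by eye, the output of kubectl get nodes can be filtered. This is a small sketch under the assumption that the output has the usual column order (NAME, STATUS, ...); not_ready_nodes is a hypothetical helper that reads the listing on stdin.

```shell
#!/bin/sh
# Hypothetical helper (not from the original page): read
# "kubectl get nodes --no-headers" output on stdin and print the names
# of nodes whose STATUS column is not exactly "Ready".
# Returns non-zero if any such node is found.
not_ready_nodes() {
    awk '$2 != "Ready" { print $1; bad = 1 } END { exit bad }'
}
```

Usage would be kubectl get nodes --no-headers | not_ready_nodes. Note that a cordoned node (status Ready,SchedulingDisabled) is also reported by this filter, since its status is not exactly Ready.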

Setup Worker Nodes

Perform the following steps on all of the worker nodes (k8sworker1, k8sworker2, k8sworker3).

Set the hostname

Use the hostname that matches the node (k8sworker1, k8sworker2, or k8sworker3):

> sudo su

> hostnamectl set-hostname k8sworker1

> hostnamectl

> reboot

Join the Cluster

From the worker nodes issue the following command. If your token has expired, you may need to create a new one.

> sudo su

> kubeadm join k8slb.ott.dev.intra:6443 --token ktemf3.pshisb9lspt1i40i --discovery-token-ca-cert-hash sha256:1e737466a59f00083a4ddf43c9fcf446a5b1cee8346afd1565d341fe5dee2c46
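If the bootstrap token has expired, kubeadm can issue a new one and print the matching join command in a single step. The wrapper below is a sketch: new_join_command is a hypothetical name, and it is meant to be run as root on a node that is already a master.

```shell
#!/bin/sh
# Hypothetical wrapper (not from the original page): print a fresh
# "kubeadm join ..." command. Fails with a message if kubeadm is not
# installed on this host.
new_join_command() {
    if ! command -v kubeadm >/dev/null 2>&1; then
        echo "kubeadm not found on this host" >&2
        return 1
    fi
    # Creates a new bootstrap token and prints the full join command,
    # including the --discovery-token-ca-cert-hash value.
    kubeadm token create --print-join-command
}
```

The printed command can be used as-is on a worker. For an additional master on the version used in this guide, append --experimental-control-plane as described earlier.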

Verify that the nodes have been added by issuing the following command at the master:

> kubectl get nodes

| Code Block |

|---|

NAME         STATUS   ROLES    AGE     VERSION
k8smaster1   Ready    master   27m     v1.13.4
k8smaster2   Ready    master   8m43s   v1.13.4
k8smaster3   Ready    master   7m56s   v1.13.4
k8sworker1   Ready    <none>   71s     v1.13.4
k8sworker2   Ready    <none>   63s     v1.13.4
k8sworker3   Ready    <none>   25s     v1.13.4
|

By default, your cluster will not schedule pods on the master for security reasons. If you want to be able to schedule pods on the master, e.g. for a single-machine Kubernetes cluster for development, run:

...

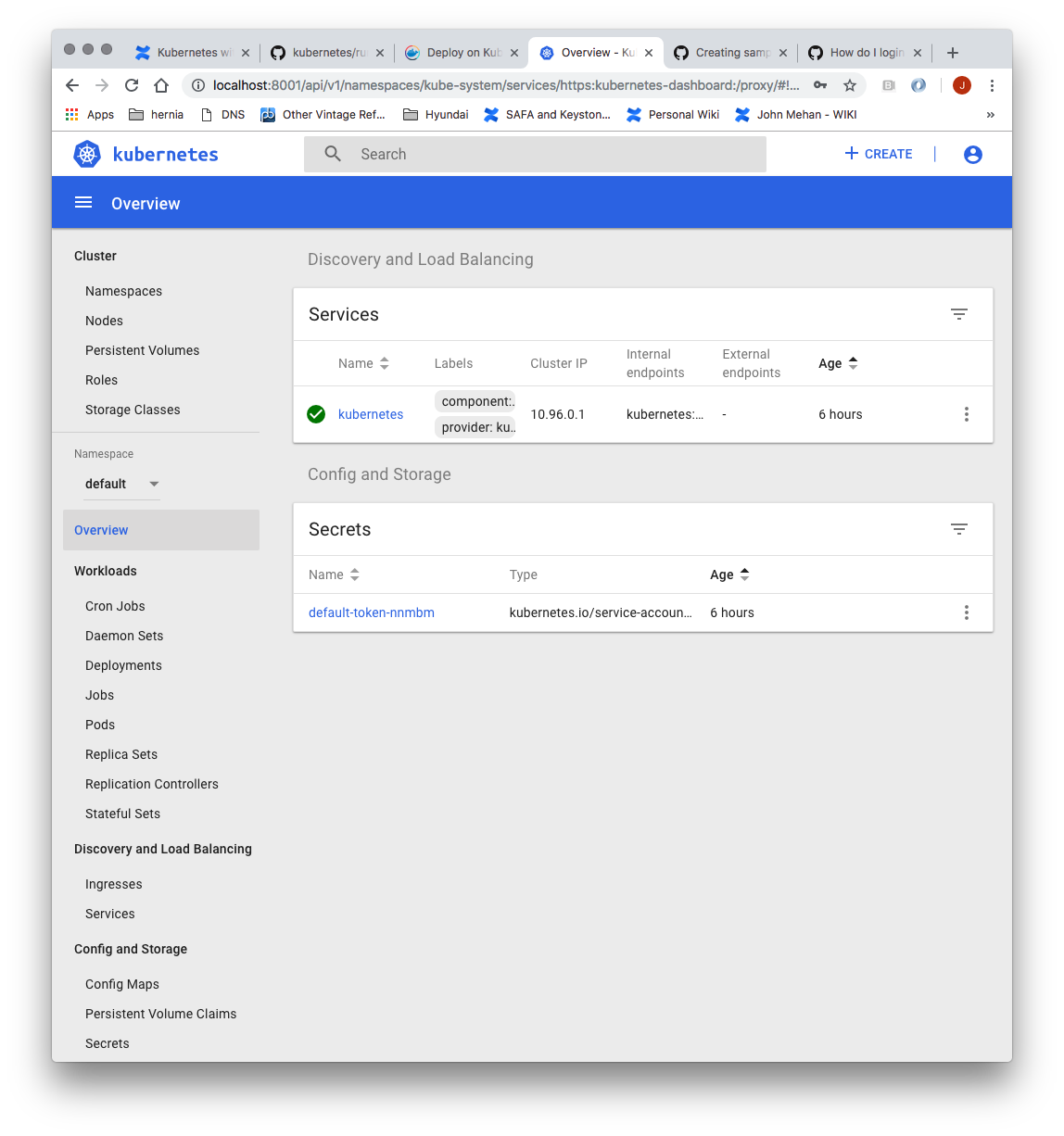

Install Dashboard

From the master node:

| Code Block |

|---|

> kubectl create -f https://raw.githubusercontent.com/kubernetes/dashboard/master/aio/deploy/recommended/kubernetes-dashboard.yaml
secret/kubernetes-dashboard-certs created
serviceaccount/kubernetes-dashboard created
role.rbac.authorization.k8s.io/kubernetes-dashboard-minimal created
rolebinding.rbac.authorization.k8s.io/kubernetes-dashboard-minimal created
deployment.apps/kubernetes-dashboard created
service/kubernetes-dashboard created
|

...

| Code Block |

|---|

> kubectl -n kube-system describe secret $(kubectl -n kube-system get secret | grep admin-user | awk '{print $1}')

Name: admin-user-token-s6x6d

Namespace: kube-system

Labels: <none>

Annotations: kubernetes.io/service-account.name: admin-user

kubernetes.io/service-account.uid: 7f55a022-1a05-11e9-a8cb-6c3be541582b

Type: kubernetes.io/service-account-token

Data

====

namespace: 11 bytes

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IiJ9.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJhZG1pbi11c2VyLXRva2VuLXM2eDZkIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQubmFtZSI6ImFkbWluLXVzZXIiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC51aWQiOiI3ZjU1YTAyMi0xYTA1LTExZTktYThjYi02YzNiZTU0MTU4MmIiLCJzdWIiOiJzeXN0ZW06c2VydmljZWFjY291bnQ6a3ViZS1zeXN0ZW06YWRtaW4tdXNlciJ9.btoYxamkG_dww0sht85C_txkXr6v0rwISzwkyGT1ivj9MIgrvBG0eF044VOihhWyqjv-lJQOpbFk3TnbDE4QWUr9aFyiQZo_qQGgqSj_NBKWuDw3N0KTjk_siB_3Zb4tOCGe9i0iJ3zwYxDlh5eAD_-YiY-yU8QKY85pJPbTqR4USWiHh4Saj4HEg0TM0EUIToCqF-u9qEF0Y51dc17VW3NKeCEIfarP4x893yzYRnNrbk8ItgjQqln6oQ2AH49dow28fUiGyUCcu1dazXrOr0A_j-Lg-5KaVkT6dXIU5SMMMlrhszrdmrK9akbEP3AdKRErVNosVR_afWdOo8lByA

ca.crt: 1025 bytes |
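Only the token value is needed to sign in, so it can be convenient to filter it out of the describe output rather than copying it by hand. A minimal sketch, assuming the output format shown above; extract_token is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper (not from the original page): read
# "kubectl describe secret" output on stdin and print only the value
# of its "token:" field.
extract_token() {
    awk '$1 == "token:" { print $2; exit }'
}
```

Piping the describe command above into extract_token prints just the token, ready to paste into the Dashboard sign-in form.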

Access the Dashboard by using the API proxy

From your local machine:

> ssh -L 8001:127.0.0.1:8001 <USER>@<IP>

> kubectl proxy

Browse to:

http://localhost:8001/api/v1/namespaces/kube-system/services/https:kubernetes-dashboard:/proxy/.

Access the Dashboard using port forwarding

From your local machine:

> ssh -L 8443:127.0.0.1:8443 <USER>@<IP>

> kubectl port-forward $(kubectl -n kube-system get pods |grep kubernetes-dashboard |awk '{print $1}') 8443:8443 --namespace=kube-system

Browse to:

https://localhost:8443

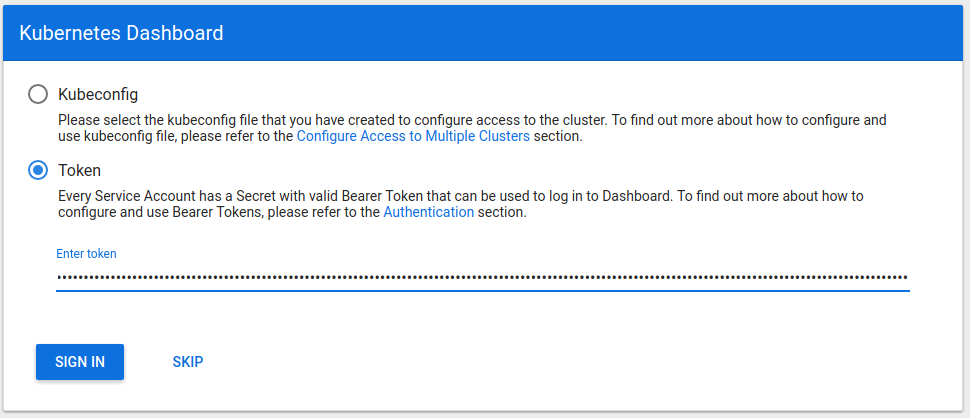

Sign in to the Dashboard

Sign in using the token previously retrieved.

Install Sample Pod

> vi nginx-example.yaml

| Code Block |

|---|

apiVersion: apps/v1 # for versions before 1.9.0 use apps/v1beta2
kind: Deployment
metadata:
  name: nginx-deployment
spec:
  selector:
    matchLabels:
      app: nginx
  replicas: 2 # tells deployment to run 2 pods matching the template
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: nginx:1.7.9
        ports:
        - containerPort: 80
---
apiVersion: v1
kind: Service
metadata:
  name: nginx
spec:
  type: NodePort
  selector:
    app: nginx
  ports:
  - port: 80
    nodePort: 31080
    name: nginx
|

> kubectl apply -f nginx-example.yaml

Expose your nginx pods via a nodePort

The Service defined in nginx-example.yaml is of type NodePort, so applying the file also exposes the nginx pods; no separate kubectl expose step is needed.

> kubectl get services

| Code Block |

|---|

NAME         TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)        AGE
kubernetes   ClusterIP   10.96.0.1       <none>        443/TCP        5h32m
nginx        NodePort    10.99.190.114   <none>        80:31080/TCP   2m46s
|

From the above we can see that the nginx service is exposed on port 31080.
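Reading the port off the table works for a one-off check; in a script the node port can be pulled out of the PORT(S) column instead. The helper below is a sketch with a hypothetical name, node_port_of, and it assumes the default column layout of kubectl get services.

```shell
#!/bin/sh
# Hypothetical helper (not from the original page): read
# "kubectl get services" output on stdin and print the node port of the
# named service, taken from the PORT(S) column (e.g. 80:31080/TCP).
# Usage: kubectl get services | node_port_of nginx
node_port_of() {
    awk -v svc="$1" '$1 == svc {
        # "80:31080/TCP" splits into a[1]=80, a[2]=31080, a[3]=TCP
        split($5, a, /[:\/]/)
        print a[2]
        exit
    }'
}
```

For a service with several ports this prints only the first one. An alternative that avoids parsing the table is kubectl get service nginx -o jsonpath='{.spec.ports[0].nodePort}'.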

Verify by issuing the following command:

> curl http://<NODE_IP>:31080

Troubleshooting

Reset and start all over

> sudo kubeadm reset

CentOS Commands

| Cmd | Description |
|---|---|
| hostname -I | Get the IP address |
| ip addr | Show network interfaces and addresses (replaces ifconfig) |

References

| Reference | URL |
|---|---|
| Install and configure a multi-master Kubernetes cluster with kubeadm | https://blog.inkubate.io/install-and-configure-a-multi-master-kubernetes-cluster-with-kubeadm/ |
| High Availability Cluster | https://kubernetes.io/docs/setup/independent/high-availability/ |
| Building a Kubernetes Cluster | https://medium.com/@KevinHoffman/building-a-kubernetes-cluster-in-virtualbox-with-ubuntu-22cd338846dd |
| Cluster Networking | https://kubernetes.io/docs/concepts/cluster-administration/networking/ |
| Creating a single master cluster with kubeadm | https://kubernetes.io/docs/setup/independent/create-cluster-kubeadm/ |
| Kubernetes Network Plugins | https://kubedex.com/kubernetes-network-plugins/ |
| Flannel | https://github.com/coreos/flannel#flannel |
| Dashboard | https://kubernetes.io/docs/tasks/access-application-cluster/web-ui-dashboard/#using-dashboard |
| Adding Users to CentOS | https://www.rosehosting.com/blog/how-to-create-a-sudo-user-on-centos-7/ |

...