...

> sudo systemctl start haproxy

> sudo systemctl enable haproxy

> sudo systemctl status haproxy

...

> sudo hostnamectl set-hostname k8smaster1

> sudo hostnamectl

Generate SSH Key

As test:

> ssh-keygen -t rsa -b 2048

Copy to other nodes

> ssh-copy-id test@172.20.233.182

...

> ssh-copy-id test@172.20.233.186

As root:

> sudo su

> ssh-keygen -t rsa -b 2048

Copy to other nodes

> ssh-copy-id test@172.20.233.182

...

> ssh-copy-id test@172.20.233.186
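The repeated ssh-copy-id calls above can be wrapped in a small loop. This is a minimal sketch, not part of the original procedure: the `NODE_IPS` list, the `SSH_COPY_ID` override and the `copy_key_to_nodes` helper are illustrative names, and the address list must be replaced with the nodes of your own cluster.

```shell
# Sketch: push the current user's public key to several nodes in one pass.
# NODE_IPS is illustrative; list every node address in your own cluster.
NODE_IPS="172.20.233.182 172.20.233.186"

# Set SSH_COPY_ID=echo for a dry run that only prints the targets.
SSH_COPY_ID="${SSH_COPY_ID:-ssh-copy-id}"

copy_key_to_nodes() {
  user="$1"
  shift
  for ip in "$@"; do
    # Installs the local public key in the remote user's authorized_keys.
    "$SSH_COPY_ID" "${user}@${ip}" || echo "failed: ${ip}" >&2
  done
}
```

Run it once as test and once as root, for example `copy_key_to_nodes test $NODE_IPS` (left unquoted on purpose so the list splits into separate addresses).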

...

Initialize Master (using Flannel)

> sudo kubeadm init --apiserver-advertise-address <IP ADDRESS> --pod-network-cidr=10.244.0.0/16

> sudo kubeadm init --config=kubeadm-config.yaml ...

| Code Block |

|---|

[init] Using Kubernetes version: v1.13.4

[preflight] Running pre-flight checks

...

Your Kubernetes master has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of machines by running the following on each node as root:

kubeadm join k8slb.ott.dev.intra:6443 --token ktemf3.pshisb9lspt1i40i --discovery-token-ca-cert-hash sha256:1e737466a59f00083a4ddf43c9fcf446a5b1cee8346afd1565d341fe5dee2c46 |

Record the kubeadm join command!
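When recording the join command, it is easy to truncate or merge the token and hash while copying. A minimal sketch of a format check follows; the helper names `valid_token` and `valid_ca_hash` are illustrative. It assumes the standard kubeadm bootstrap token format (six characters, a dot, sixteen characters) and a SHA-256 discovery hash of 64 hex digits. It only checks the shape of the values, not whether the cluster will accept them.

```shell
# Sketch: sanity-check a recorded join token and CA cert hash before reuse.
# Bootstrap tokens look like [a-z0-9]{6}.[a-z0-9]{16}; the discovery hash
# is "sha256:" followed by 64 lowercase hex digits.
valid_token() {
  printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

valid_ca_hash() {
  printf '%s\n' "$1" | grep -Eq '^sha256:[0-9a-f]{64}$'
}
```

For example, `valid_token "ktemf3.pshisb9lspt1i40i" && echo "token format ok"`.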

...

| Code Block |

|---|

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system coredns-86c58d9df4-8zk5t 1/1 Running 0 47h

kube-system coredns-86c58d9df4-tsftk 1/1 Running 0 47h

kube-system etcd-k8master 1/1 Running 1 47h

kube-system kube-apiserver-k8master 1/1 Running 1 47h

kube-system kube-controller-manager-k8master 1/1 Running 1 47h

kube-system kube-flannel-ds-amd64-fl5wp 1/1 Running 0 12s

kube-system kube-proxy-88gdq 1/1 Running 1 47h

kube-system kube-scheduler-k8master 1/1 Running 1 47h |

By default, your cluster will not schedule pods on the master for security reasons. If you want to be able to schedule pods on the master, e.g. for a single-machine Kubernetes cluster for development, run:

> kubectl taint nodes --all node-role.kubernetes.io/master-

Copy Certificates to Other Master Nodes

> sudo su

> vi copyCertsToMasters.sh

| Code Block |

|---|

USER=test # customizable

CONTROL_PLANE_IPS="172.20.233.182 172.20.233.183"

for host in ${CONTROL_PLANE_IPS}; do

scp /etc/kubernetes/pki/ca.crt "${USER}"@$host:

scp /etc/kubernetes/pki/ca.key "${USER}"@$host:

scp /etc/kubernetes/pki/sa.key "${USER}"@$host:

scp /etc/kubernetes/pki/sa.pub "${USER}"@$host:

scp /etc/kubernetes/pki/front-proxy-ca.crt "${USER}"@$host:

scp /etc/kubernetes/pki/front-proxy-ca.key "${USER}"@$host:

scp /etc/kubernetes/pki/etcd/ca.crt "${USER}"@$host:etcd-ca.crt

scp /etc/kubernetes/pki/etcd/ca.key "${USER}"@$host:etcd-ca.key

scp /etc/kubernetes/admin.conf "${USER}"@$host:

done |
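Before running the copy script, it may help to confirm that every PKI file it expects is present, since scp will otherwise fail partway through the loop. The following is a minimal sketch: `missing_pki_files` is an illustrative helper name, and the file list mirrors the script above. admin.conf lives in /etc/kubernetes rather than the pki directory, so this sketch does not check it.

```shell
# Sketch: print any PKI file the copy script expects that is missing.
# The directory defaults to /etc/kubernetes/pki and can be overridden
# with the first argument, e.g. to try the check on a scratch directory.
missing_pki_files() {
  pki_dir="${1:-/etc/kubernetes/pki}"
  for f in ca.crt ca.key sa.key sa.pub \
           front-proxy-ca.crt front-proxy-ca.key \
           etcd/ca.crt etcd/ca.key; do
    # Print the full path of each file that does not exist.
    [ -f "${pki_dir}/${f}" ] || echo "${pki_dir}/${f}"
  done
}
```

An empty result means all files are in place, for example `[ -z "$(missing_pki_files)" ] && ./copyCertsToMasters.sh`.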

...

> ./copyCertsToMasters.sh

...

Setup Other Master Nodes

Perform the following steps on the other master nodes (k8smaster2 and k8smaster3).

Set the hostname

Use the node's own name (k8smaster2 or k8smaster3); k8smaster2 is shown here.

> sudo su

> hostnamectl set-hostname k8smaster2

> hostnamectl

> reboot

Move Certificates

SSH to each of the other master nodes by IP address, for example:

> ssh test@172.20.233.182

> sudo su

> vi moveFilesFromMaster.sh

...

> ./moveFilesFromMaster.sh

Join the Cluster

From k8smaster2 and k8smaster3 issue the following commands:

> sudo su

> kubeadm join k8slb.ott.dev.intra:6443 --token ktemf3.pshisb9lspt1i40i --discovery-token-ca-cert-hash sha256:1e737466a59f00083a4ddf43c9fcf446a5b1cee8346afd1565d341fe5dee2c46 --experimental-control-plane

...

Notice the addition of the --experimental-control-plane flag. This flag automates joining this control plane node to the cluster.

As your non-root user:

Verify that the node has joined the cluster:

From k8smaster1, under the test account, issue the following commands:

> kubectl get pod -n kube-system -w

> kubectl get nodes

Setup Worker Nodes

Perform the following steps on all of the worker nodes (k8sworker1, k8sworker2, k8sworker3).

Set the hostname

> sudo su

> hostnamectl set-hostname k8sworker1

> hostnamectl

> reboot

Join the Cluster

From the worker nodes, issue the following command. If your token has expired, you may need to create a new one.

> sudo su

> kubeadm join k8slb.ott.dev.intra:6443 --token ktemf3.pshisb9lspt1i40i --discovery-token-ca-cert-hash sha256:1e737466a59f00083a4ddf43c9fcf446a5b1cee8346afd1565d341fe5dee2c46
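If the join fails because the token has expired (kubeadm tokens last 24 hours by default), a new token and matching join command can be generated on an existing master. The wrapper below is a minimal sketch: `new_join_command` is an illustrative name, while `kubeadm token create --print-join-command` is the underlying kubeadm call. It assumes you are already root, as in the steps above.

```shell
# Sketch: print a fresh "kubeadm join ..." line when the old token has expired.
# Run as root on an existing master node.
new_join_command() {
  if ! command -v kubeadm >/dev/null 2>&1; then
    echo "kubeadm not found on PATH" >&2
    return 1
  fi
  # Creates a new bootstrap token and prints the full join command for it.
  kubeadm token create --print-join-command
}
```

The printed command is for worker nodes; when joining another master on this kubeadm version, append the --experimental-control-plane flag described earlier.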

Verify that the nodes have been added by issuing the following command at the master:

> kubectl get nodes

| Code Block |

|---|

NAME STATUS ROLES AGE VERSION

k8smaster1 Ready master 27m v1.13.4

k8smaster2 Ready master 8m43s v1.13.4

k8smaster3 Ready master 7m56s v1.13.4

k8sworker1 Ready <none> 71s v1.13.4

k8sworker2 Ready <none> 63s v1.13.4

k8sworker3 Ready <none> 25s v1.13.4 |
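With six nodes, a node that has not reached Ready is easy to miss by eye. The following is a minimal sketch of a filter over the `kubectl get nodes` output. `not_ready_nodes` is an illustrative helper, and it assumes the default column layout shown above, with STATUS in the second column.

```shell
# Sketch: read `kubectl get nodes` output on stdin and print the name of
# every node whose STATUS column is not exactly "Ready"
# (for example NotReady or Ready,SchedulingDisabled).
not_ready_nodes() {
  # NR > 1 skips the header row; $1 is NAME and $2 is STATUS.
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}
```

Usage: `kubectl get nodes | not_ready_nodes`. No output means every node reports Ready.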

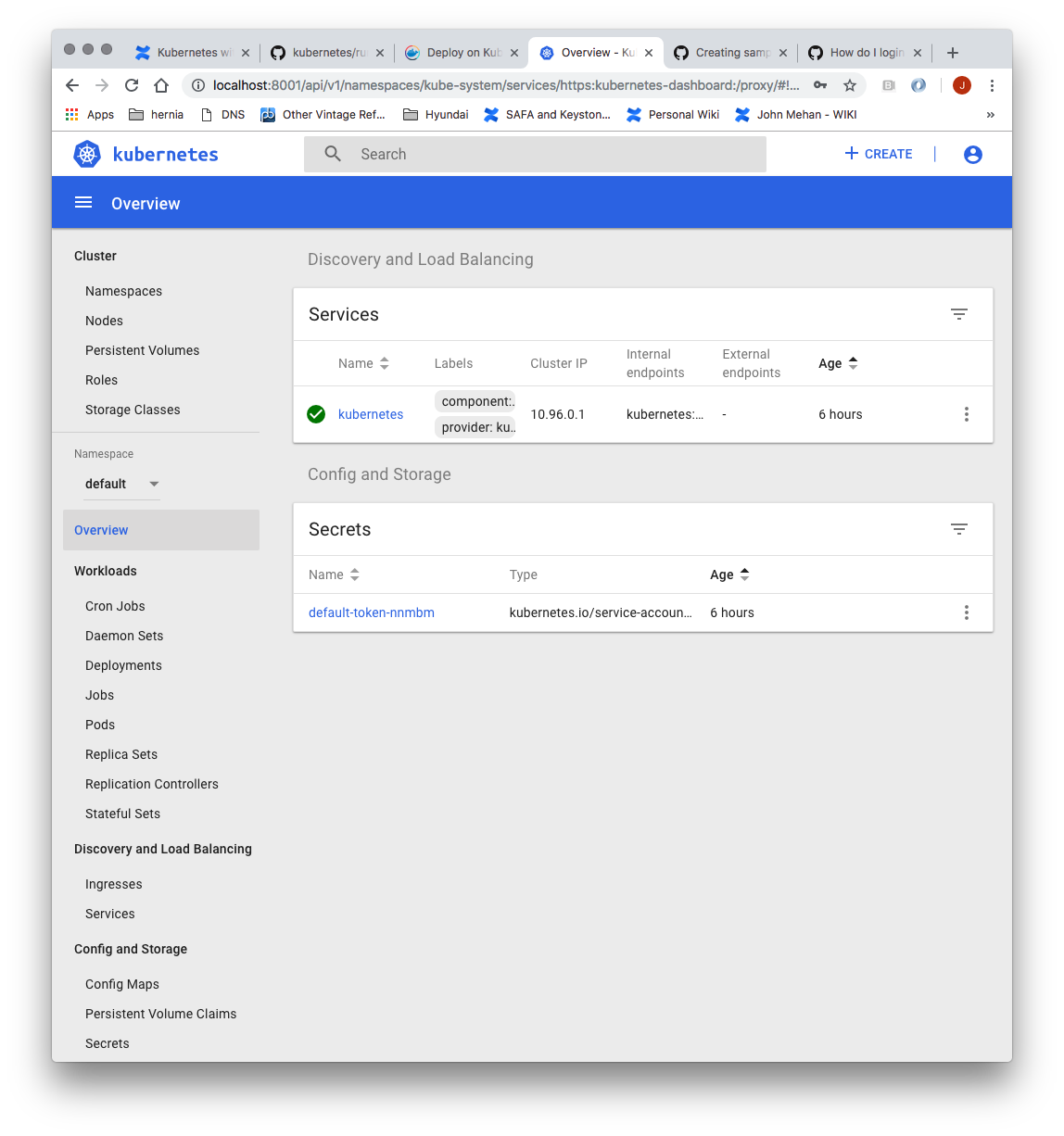

Install Dashboard

From the master node:

| Code Block |

|---|

> kubectl create -f https://raw.githubusercontent.com/kubernetes/dashboard/master/aio/deploy/recommended/kubernetes-dashboard.yaml

secret/kubernetes-dashboard-certs created

serviceaccount/kubernetes-dashboard created

role.rbac.authorization.k8s.io/kubernetes-dashboard-minimal created

rolebinding.rbac.authorization.k8s.io/kubernetes-dashboard-minimal created

deployment.apps/kubernetes-dashboard created

service/kubernetes-dashboard created |

...

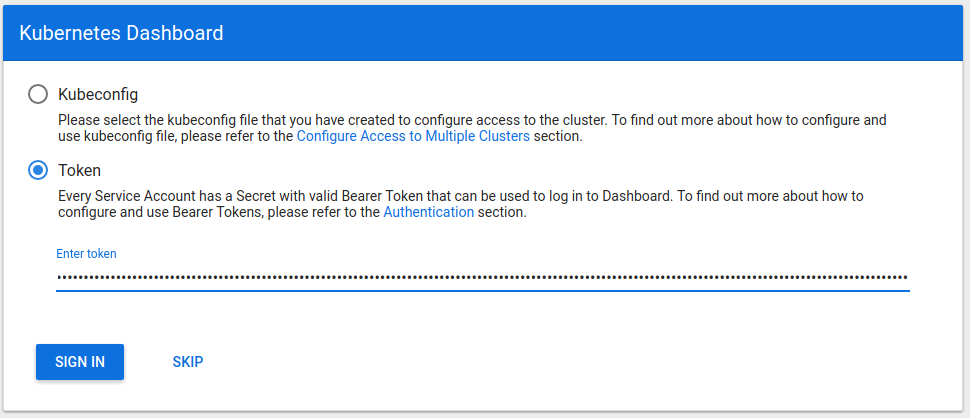

Sign in using the token previously retrieved.

Install Sample Pod

> vi nginx-example.yaml

| Code Block |

|---|

apiVersion: apps/v1 # for versions before 1.9.0 use apps/v1beta2

kind: Deployment

metadata:

  name: nginx-deployment

spec:

  selector:

    matchLabels:

      app: nginx

  replicas: 3 # tells deployment to run 3 pods matching the template

  template:

    metadata:

      labels:

        app: nginx

    spec:

      containers:

      - name: nginx

        image: nginx:1.7.9

        ports:

        - containerPort: 80

---

apiVersion: v1

kind: Service

metadata:

  name: nginx

spec:

  type: NodePort

  selector:

    app: nginx

  ports:

  - port: 80

    nodePort: 31080

    name: nginx |

> kubectl apply -f nginx-example.yaml

Expose your nginx pods via a NodePort (skip this step if the nginx Service from nginx-example.yaml already exists):

> kubectl expose deployment nginx-deployment --type=NodePort --name=nginx

> kubectl get services

| Code Block |

|---|

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 5h32m

nginx NodePort 10.99.190.114 <none> 80:31080/TCP 2m46s |

From the above we can see that the nginx service is exposed on port 31080.

Verify by issuing the following command:

> curl http://<NODE_IP>:31080
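The node port can also be read from the PORT(S) column rather than by eye. The following is a minimal sketch: `node_port_from_ports` is an illustrative helper, and it assumes a single port mapping in the `port:nodePort/protocol` form shown in the services listing.

```shell
# Sketch: pull the node port out of a PORT(S) value such as "80:31080/TCP".
# Handles one port mapping only.
node_port_from_ports() {
  ports="$1"
  ports="${ports#*:}"            # drop the service port and colon
  printf '%s\n' "${ports%%/*}"   # drop the protocol suffix
}
```

For example, `node_port_from_ports "80:31080/TCP"` prints 31080. On a live cluster, `kubectl get service nginx -o jsonpath='{.spec.ports[0].nodePort}'` returns the same value directly.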

Troubleshooting

Reset and start all over

...